The 9th issue of the Wing Inflight Magazine.


In Search for Winglang Middleware Part One

Introducing a new programming language creates an opportunity and an obligation to reevaluate existing methodologies, solutions, and the entire ecosystem, from language syntax and toolchain to the standard library, through the lens of first principles.

Simply lifting and shifting existing applications to the cloud has been broadly recognized as risky and sub-optimal. Such a transition tends to render applications less secure, inefficient, and costly without proper adaptation. This principle holds for programming languages and their ecosystems.

Currently, most cloud platform vendors accommodate mainstream programming languages like Python or TypeScript with minimal adjustments. While leveraging existing languages and their vast ecosystems has certain advantages—given it takes about a decade for a new programming language to gain significant traction—it's constrained by the limitations of third-party libraries and tools designed primarily for desktop or server environments, with perhaps a nod towards containerization.

Winglang is a new programming language pioneering a cloud-oriented paradigm that seeks to rethink the cloud software development stack from the ground up. My initial evaluations of Winglang's syntax, standard library, and toolchain were presented in two prior Medium publications:

- Hello, Winglang Hexagon!: Exploring Cloud Hexagonal Design with Winglang, TypeScript, and Ports & Adapters

- Implementing Production-grade CRUD REST API in Winglang: The First Steps

Capitalizing on this exploration, I will focus now on the higher-level infrastructure frameworks, often called 'Middleware'. Given its breadth and complexity, Middleware development cannot be comprehensively covered in a single publication. Thus, this publication is probably the beginning of a series where each part will be published as new materials are gathered, insights derived, or solutions uncovered.

Part One of the series, the current publication, will provide an overview of Middleware origins and discuss the current state of affairs, and possible directions for Winglang Middleware. The next publications will look at more specific aspects.

With Winglang being a rapidly evolving language, distinguishing the core language features from the third-party Middleware built atop them will remain an unfolding narrative throughout this series. Stay tuned.

Acknowledgments

Throughout the preparation of this publication, I utilized several key tools to enhance the draft and ensure its quality.

The initial draft was crafted with the organizational capabilities of Notion's free subscription, facilitating the structuring and development of ideas.

For grammar and spelling review, the free version of Grammarly proved useful for identifying and correcting basic errors, ensuring the readability of the text.

The enhancement of stylistic expression and the narrative coherence checks were performed using the paid version of ChatGPT 4.0.

I owe a special mention to Nick Gal’s informative blog post for illuminating the origins of the term "Middleware," helping to set the correct historical context of the whole discussion.

While these advanced tools and resources significantly contributed to the preparation process, the concepts, solutions, and final decisions presented in this article are entirely my own, for which I bear full responsibility.

What is Middleware?

The term "Middleware" has come a long way from its inception and formal definitions to its usage in day-to-day software development practices, particularly within web development.

Covering every nuance and variation of Middleware would be a long journey, worthy of a comprehensive volume entitled “The History of Middleware”, a volume still awaiting its author.

In this exploration, we aim to chart the principal course, distilling the essence of Middleware and its crucial role in filling the gap between basic-level infrastructure and the practical needs of cloud-based applications development.

Origins of Middleware

The concept of Middleware traces its roots back to an intriguing figure: the Russian-born British cartographer and cryptographer Alexander d’Agapeyeff, who introduced it at the "1968 NATO Software Engineering Conference."

Despite the scarcity of official information about d’Agapeyeff, his legacy extends beyond the enigmatic d’Agapeyeff Cipher, as he also played a pivotal role in the software industry as the founder and chairman of the "CAP Group." Insights into the early days of Middleware are illuminated by Brian Randell, a distinguished British computer scientist, in his recounting of "Some Middleware Beginnings."

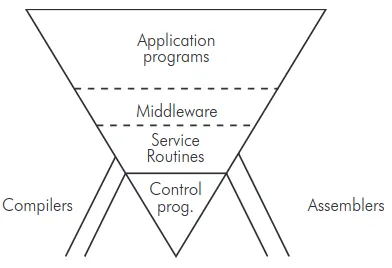

At the NATO Conference d’Agapeyeff introduced his Inverted Pyramid—a conceptual framework positioning Middleware as the critical layer bridging the gap between low-level infrastructure (such as Control Programs and Service Routines) and Application Programs:

Fig 1: Alexander d'Agapeyeff's Pyramid

Here is how A. d’Agapeyeff explains it:

An example of the kind of software system I am talking about is putting all the applications in a hospital on a computer, whereby you get a whole set of people to use the machine. This kind of system is very sensitive to weaknesses in the software, particular as regards the inability to maintain the system and to extend it freely.

This sensitivity of software can be understood if we liken it to what I will call the inverted pyramid... The buttresses are assemblers and compilers. They don’t help to maintain the thing, but if they fail you have a skew. At the bottom are the control programs, then the various service routines. Further up we have what I call middleware.

This is because no matter how good the manufacturer’s software for items like file handling it is just not suitable; it’s either inefficient or inappropriate. We usually have to rewrite the file handling processes, the initial message analysis and above all the real-time schedulers, because in this type of situation the application programs interact and the manufacturer’s software tends to throw them off at the drop of a hat, which is somewhat embarrassing. On the top you have a whole chain of application programs.

The point about this pyramid is that it is terribly sensitive to change in the underlying software such that the new version does not contain the old as a subset. It becomes very expensive to maintain these systems and to extend them while keeping them live.

A. d'Agapeyeff emphasized the delicate balance within this pyramid, noting how sensitive it is to changes in the underlying software that do not preserve backward compatibility. He also warned against the danger of over-generalized software that is too often unsuitable for any practical need:

In aiming at too many objectives the higher-level languages have, perhaps, proved to be useless to the layman, too complex for the novice and too restricted for the expert.

Despite improvements in general-purpose file handling and other advancements since d’Agapeyeff's time, the essence of his observations remains relevant.

There is still a big gap between low-level infrastructure, today encapsulated in an Operating System, like Linux, and the needs of final applications. The Operating System layer reflects and simplifies access to hardware capabilities, which are common to almost all applications.

Higher-level infrastructure needs, however, vary between different groups of applications: some prioritize minimizing the operational cost, others the speed of development, and yet others tightened security.

Different implementations of the Middleware layer are intended to fill this gap and to provide domain-neutral services that are better tailored to the non-functional requirements of various groups of applications.

This consideration also explains why it’s always preferable to keep the core language, in our case Winglang, and its standard library relatively small and stable, leaving more variability to be addressed by these intermediate Middleware layers.

Patterns, Frameworks, and Middleware

The middleware definition was refined in the “Patterns, Frameworks, and Middleware: Their Synergistic Relationships” paper, published in 2003 by Douglas C. Schmidt and Frank Buschmann. Here, they define middleware as:

software that can significantly increase reuse by providing readily usable, standard solutions to common programming tasks, such as persistent storage, (de)marshaling, message buffering and queueing, request demultiplexing, and concurrency control. Developers who use middleware can therefore focus primarily on application-oriented topics, such as business logic, rather than wrestling with tedious and error-prone details associated with programming infrastructure software using lower-level OS APIs and mechanisms.

To understand the interplay between Design Patterns, Frameworks and Middleware, let’s start with formal definitions derived from the “Patterns, Frameworks, and Middleware: Their Synergistic Relationships” paper Abstract:

Patterns codify reusable design expertise that provides time-proven solutions to commonly occurring software problems that arise in particular contexts and domains.

Frameworks provide both a reusable product-line architecture – guided by patterns – for a family of related applications and an integrated set of collaborating components that implement concrete realizations of the architecture.

Middleware is reusable software that leverages patterns and frameworks to bridge the gap between the functional requirements of applications and the underlying operating systems, network protocol stacks, and databases.

In other words, Middleware is implemented in the form of one or more Frameworks, which in turn apply several Design Patterns to achieve their goals including future extensibility. Exactly this combination, when implemented correctly, ensures Middleware's ability to flexibly address the infrastructure needs of large yet distinct groups of applications.

Let’s take a closer look at the definitions of each element presented above.

Design Patterns

In the realm of software engineering, a Software Design Pattern is understood as a generalized, reusable blueprint for addressing frequent challenges encountered in software design. As defined by Wikipedia:

In software engineering, a software design pattern is a general, reusable solution to a commonly occurring problem within a given context in software design. It is not a finished design that can be transformed directly into source or machine code. Rather, it is a description or template for how to solve a problem that can be used in many different situations. Design patterns are formalized best practices that the programmer can use to solve common problems when designing an application or system.

Sometimes, the term Architectural Pattern is used to distinguish high-level software architecture decisions from lower-level, implementation-oriented Design Patterns, as defined in Wikipedia:

An architectural pattern is a general, reusable resolution to a commonly occurring problem in software architecture within a given context. The architectural patterns address various issues in software engineering, such as computer hardware performance limitations, high availability and minimization of a business risk. Some architectural patterns have been implemented within software frameworks.

It is essential to differentiate Architectural and Design Patterns from their implementations in specific software projects. While an Architectural or Design Pattern provides an initial idea for a solution, its implementation may involve a combination of several patterns, tailored to the unique requirements and nuances of the project at hand.

Architectural Patterns, such as Pipe-and-Filters, and Design Patterns, such as the Decorator, are not only about solving problems in code. They also serve as a common language among architects and developers, facilitating more straightforward communication about software structure and design choices. They are also invaluable tools for analyzing existing solutions, as we will see later.

Software Frameworks

In the domain of computer programming, a Software Framework represents a sophisticated form of abstraction, designed to standardize the development process by offering a reusable set of libraries or tools. As defined by Wikipedia:

In computer programming, a software framework is an abstraction in which software, providing generic functionality, can be selectively changed by additional user-written code, thus providing application-specific software.

It provides a standard way to build and deploy applications and is a universal, reusable software environment that provides particular functionality as part of a larger software platform to facilitate the development of software applications, products and solutions.

In other words, a Software Framework is an evolved Software Library that employs the principle of inversion of control. This means the framework, rather than the user's application, takes charge of the control flow. The application-specific code is then integrated through callbacks or plugins, which the framework's core logic invokes as needed.
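The difference between calling a library and plugging into a framework can be sketched in a few lines of TypeScript. This is a minimal illustration only: `parseGreeting`, `MiniFramework`, and `GreetingPlugin` are hypothetical names invented for this example and do not belong to any real library.

```typescript
// Library style: the application owns the control flow and calls the helper.
function parseGreeting(raw: string): string {
  return raw.trim().toUpperCase();
}

// Framework style: the framework owns the control flow and calls back
// into application code that was registered as plugins.
type GreetingPlugin = (input: string) => string;

class MiniFramework {
  private readonly plugins: GreetingPlugin[] = [];

  // Application code registers callbacks; it never drives the loop itself.
  register(plugin: GreetingPlugin): this {
    this.plugins.push(plugin);
    return this;
  }

  // The framework decides when and in which order the plugins run.
  run(input: string): string {
    return this.plugins.reduce<string>((value, plugin) => plugin(value), input);
  }
}

const libraryResult = parseGreeting("  hello ");
const frameworkResult = new MiniFramework()
  .register((s) => s.trim())
  .register((s) => `${s}, Winglang!`)
  .run("  hello ");

console.log(libraryResult); // HELLO
console.log(frameworkResult); // hello, Winglang!
```

In the second half, the application never calls its own callbacks; it only hands them over, which is the essence of inversion of control.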

Utilizing a Software Framework as the foundational layer for integrating domain-specific code with the underlying infrastructure allows developers to significantly decrease the development time and effort for complex software applications. Frameworks facilitate adherence to established coding standards and patterns, resulting in more maintainable, scalable, and secure code.

Nonetheless, it's crucial to follow the Clean Architecture guidelines, which mandate that domain-specific code remains decoupled and independent from any framework to preserve its ability to evolve independently of any infrastructure. Therefore, an ideal Software Framework should support plugging in pure domain code without any modification.

Middleware

Middleware is defined by Wikipedia as follows:

Middleware is a type of computer software program that provides services to software applications beyond those available from the operating system. It can be described as "software glue".

Middleware in the context of distributed applications is software that provides services beyond those provided by the operating system to enable the various components of a distributed system to communicate and manage data. Middleware supports and simplifies complex distributed applications. It includes web servers, application servers, messaging and similar tools that support application development and delivery. Middleware is especially integral to modern information technology based on XML, SOAP, Web services, and service-oriented architecture.

Middleware, however, is not a monolithic entity but is rather composed of several distinct layers as we shall see in the next section.

Middleware Layers

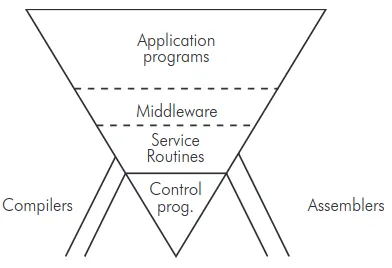

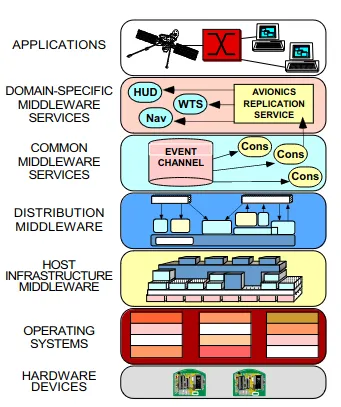

Below is an illustrative diagram portraying Middleware as a stack of such layers, each with its specialized function, as suggested in the Schmidt and Buchman paper:

Fig 2: Middleware Layers

Fig 2: Middleware Layers in Context

Layered Architecture Clarified

To appreciate the significance of this layered structure, a good understanding of the very concept of Layered Architecture is essential. This concept is too often misunderstood and confused with Multitier Architecture, deviating significantly from the original principles laid out by E.W. Dijkstra.

At the “1968 NATO Software Engineering Conference,” E.W. Dijkstra presented a paper titled “Complexity Controlled by Hierarchical Ordering of Function and Variability” where he stated:

We conceive an ordered sequence of machines: A[0], A[1], ... A[n], where A[0] is the given hardware machine and where the software of layer i transforms machine A[i] into A[i+1]. The software of layer i is defined in terms of machine A[i], it is to be executed by machine A[i], the software of layer i uses machine A[i] to make machine A[i+1].

In other words, in a correctly organized Layered Architecture, the higher-level virtual machine is implemented in terms of the lower-level virtual machine. Within this series, we will come back to this powerful technique over and over again.
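As a rough illustration of this idea, the sketch below builds three tiny "machines" in TypeScript, each implemented strictly in terms of the one beneath it. All names (`CellMachine`, `TextMachine`, `DocumentMachine`, and the `make...` functions) are assumptions made up for this example; they are not taken from Dijkstra's paper or from Winglang.

```typescript
// A[0]: the "given machine", here a store of raw number cells.
interface CellMachine {
  read(key: string): number[] | undefined;
  write(key: string, cells: number[]): void;
}

// A[1]: a text machine, defined only in terms of A[0].
interface TextMachine {
  getText(key: string): string | undefined;
  putText(key: string, text: string): void;
}

// A[2]: a JSON document machine, defined only in terms of A[1].
interface DocumentMachine {
  load<T>(key: string): T | undefined;
  save<T>(key: string, doc: T): void;
}

function makeCellMachine(): CellMachine {
  const store = new Map<string, number[]>();
  return {
    read: (key) => store.get(key),
    write: (key, cells) => {
      store.set(key, cells);
    },
  };
}

// Software of layer 0: uses machine A[0] to make machine A[1].
function makeTextMachine(lower: CellMachine): TextMachine {
  return {
    getText(key) {
      const cells = lower.read(key);
      if (cells === undefined) return undefined;
      return cells.map((code) => String.fromCharCode(code)).join("");
    },
    putText(key, text) {
      const cells: number[] = [];
      for (let i = 0; i < text.length; i++) cells.push(text.charCodeAt(i));
      lower.write(key, cells);
    },
  };
}

// Software of layer 1: uses machine A[1] to make machine A[2].
function makeDocumentMachine(lower: TextMachine): DocumentMachine {
  return {
    load<T>(key: string): T | undefined {
      const text = lower.getText(key);
      return text === undefined ? undefined : (JSON.parse(text) as T);
    },
    save<T>(key: string, doc: T): void {
      lower.putText(key, JSON.stringify(doc));
    },
  };
}

const documents = makeDocumentMachine(makeTextMachine(makeCellMachine()));
documents.save("task-1", { title: "Write Part Two", done: false });
const loadedTask = documents.load<{ title: string; done: boolean }>("task-1");
console.log(loadedTask);
```

Note that `makeDocumentMachine` knows nothing about number cells: it sees only the text machine directly below it, which is what distinguishes a layered design from a mere stack of tiers.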

Back to the Middleware Layers

Right beneath the Applications layer resides the Domain-Specific Middleware Services layer, a notion deserving a separate discussion within the broader framework of Domain-Driven Design.

Within this context, however, we are more interested in the Distribution Middleware layer, which serves as the intermediary between Host Infrastructure Middleware within a single "box" and the Common Middleware Services layer which operates across a distributed system's architecture.

As stated in the paper:

Common middleware services augment distribution middleware by defining higher-level domain-independent reusable services that allow application developers to concentrate on programming business logic.

With this understanding, we can now place Winglang Middleware within the Common Middleware Services layer, enabling the implementation of Domain-Specific Middleware Services in terms of its primitives.

To complete the picture, we need more quotes from the “Patterns, Frameworks, and Middleware: Their Synergistic Relationships” article mapped onto the modern cloud infrastructure elements.

Host Infrastructure Middleware

Here is how it’s defined in the paper:

Host infrastructure middleware encapsulates and enhances native OS mechanisms to create reusable event demultiplexing, interprocess communication, concurrency, and synchronization objects, such as reactors; acceptors, connectors, and service handlers; monitor objects; active objects; and service configurators. By encapsulating the peculiarities of particular operating systems, these reusable objects help eliminate many tedious, error-prone, and non-portable aspects of developing and maintaining application software via low-level OS programming APIs, such as Sockets or POSIX pthreads.

In the AWS environment, general-purpose virtualization services such as AWS EC2 (compute), AWS VPC (network), and AWS EBS (storage) play this role.

On the other hand, when speaking about the AWS Lambda execution environment, we may identify AWS Firecracker, AWS Lambda standard and custom Runtimes, AWS Lambda Extensions, and AWS Lambda Layers as also belonging to this category.

Distribution Middleware

Here is how it’s defined in the paper:

Distribution middleware defines higher-level distributed programming models whose reusable APIs and objects automate and extend the native OS mechanisms encapsulated by host infrastructure middleware.

Distribution middleware enables clients to program applications by invoking operations on target objects without hard-coding dependencies on their location, programming language, OS platform, communication protocols and interconnects, and hardware.

Within the AWS environment, fully managed API, Storage, and Messaging services such as AWS API Gateway, AWS SQS, AWS SNS, AWS S3, and DynamoDB would fit naturally into this category.

Common Middleware Services

Here is how it’s defined in the paper:

Common middleware services augment distribution middleware by defining higher-level domain-independent reusable services that allow application developers to concentrate on programming business logic, without the need to write the “plumbing” code required to develop distributed applications via lower-level middleware directly.

For example, common middleware service providers bundle transactional behavior, security, and database connection pooling and threading into reusable components, so that application developers no longer need to write code that handles these tasks.

Whereas distribution middleware focuses largely on managing end-system resources in support of an object-oriented distributed programming model, common middleware services focus on allocating, scheduling, and coordinating various resources throughout a distributed system using a component programming and scripting model.

Developers can reuse these component services to manage global resources and perform common distribution tasks that would otherwise be implemented in an ad hoc manner within each application. The form and content of these services will continue to evolve as the requirements on the applications being constructed expand.

Formally speaking, Winglang, its Standard Library, and its Extended Libraries collectively constitute Common Middleware Services built on top of the cloud platform's Distribution Middleware and its corresponding lower-level Common Middleware Services, represented by the cloud platform SDK for JavaScript and various Infrastructure as Code tools, such as AWS CDK or Terraform.

With Winglang Middleware we are looking for a higher level of abstraction built in terms of the core language and its library and facilitating the development of production-grade Domain-specific middleware services and applications on top of it.

Domain-Specific Middleware Services

Here is how it’s defined in the paper:

Domain-specific middleware services are tailored to the requirements of particular domains, such as telecom, e-commerce, health care, process automation, or aerospace. Unlike the other three middleware layers discussed above that provide broadly reusable “horizontal” mechanisms and services, domain-specific middleware services are targeted at “vertical” markets and product-line architectures. Since they embody knowledge of a domain, moreover, reusable domain-specific middleware services have the most potential to increase the quality and decrease the cycle-time and effort required to develop particular types of application software.

To sum up, the Winglang Middleware objective is to continue the trend of the Winglang compiler and its standard library to make developing Domain-specific middleware services less difficult.

Cloud Middleware State of Affairs

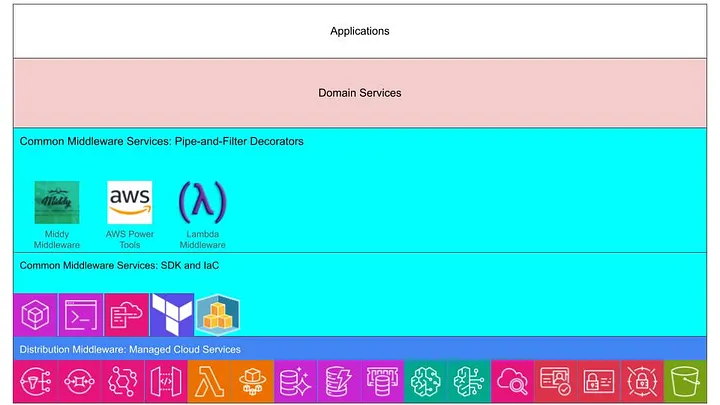

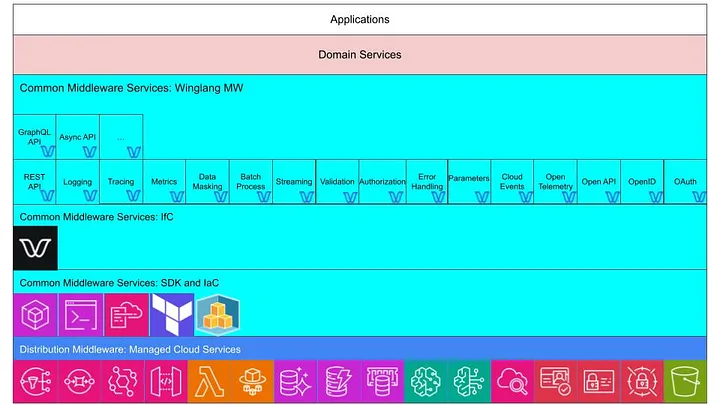

Applying the terminology introduced above, the current state of affairs with AWS cloud Middleware could be visualized as follows:

Fig 3: Cloud Middleware State of Affairs

We will look at three leading Middleware Frameworks for AWS:

- Middy (TypeScript)

- Power Tools for AWS Lambda (Python, TypeScript, Java, and .NET)

- Lambda Middleware

Middy

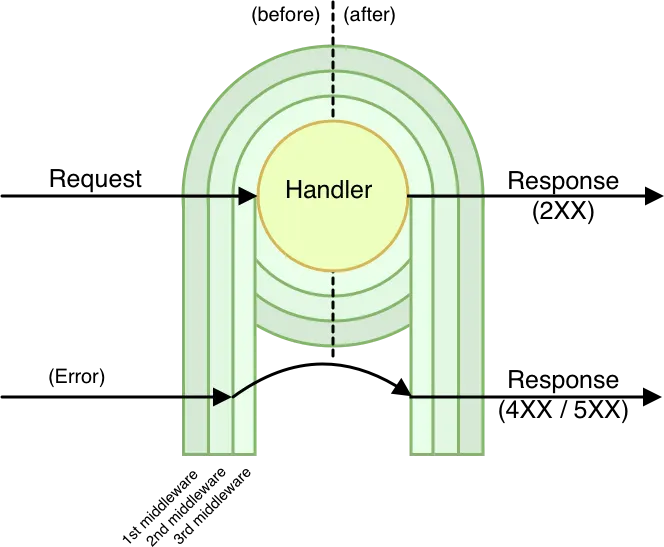

If we dive into the Middy Documentation we will find that it positions itself as a middleware engine, which is correct if we recall that very often Frameworks, which Middy is, are called Engines. However, it later claims that “… like generic web frameworks (fastify, hapi, express, etc.), this problem has been solved using the middleware pattern.” This is, as we understand now, complete nonsense. If we dive into the Middy Documentation further, we will find the following picture:

Fig 4: Middy

Now, we realize that what Middy calls “middleware” is a particular implementation of the Pipe-and-Filters Architecture Pattern via the Decorator Design Pattern. The latter should not be confused with TypeScript Decorators. In other words, Middy decorators are assembled into a pipeline, each one performing certain operations before and/or after the HTTP request handling.
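To make the combination of the two patterns concrete, here is a minimal TypeScript sketch of a pipeline assembled from decorators. It deliberately does not use the Middy API: `ApiCall`, `ApiReply`, `CallFilter`, `tracing`, and `buildPipeline` are hypothetical names chosen for this illustration, and the request and response shapes are simplified assumptions.

```typescript
// Simplified, hypothetical request and response shapes.
type ApiCall = { path: string; headers: Record<string, string> };
type ApiReply = { status: number; body: string };
type CallHandler = (call: ApiCall) => Promise<ApiReply>;

// A filter is a Decorator: it takes a handler and returns a wrapped handler.
type CallFilter = (next: CallHandler) => CallHandler;

const callTrace: string[] = [];

// Builds a filter that records when its "before" and "after" parts run.
function tracing(label: string): CallFilter {
  return (next) => async (call) => {
    callTrace.push(`before ${label}`);
    const reply = await next(call);
    callTrace.push(`after ${label}`);
    return reply;
  };
}

// Assembles the filters into a pipeline; the first filter listed
// becomes the outermost wrapper around the core handler.
function buildPipeline(filters: CallFilter[], core: CallHandler): CallHandler {
  return filters.reduceRight<CallHandler>((next, filter) => filter(next), core);
}

const coreHandler: CallHandler = async (call) => {
  callTrace.push("handler");
  return { status: 200, body: `Hello from ${call.path}` };
};

const tracedHandler = buildPipeline([tracing("auth"), tracing("logger")], coreHandler);

const pipelineRun = tracedHandler({ path: "/tasks", headers: {} }).then((reply) => {
  console.log(reply.status, callTrace.join(" -> "));
  return reply;
});
```

With the filters listed as `auth`, then `logger`, the recorded trace is `before auth`, `before logger`, `handler`, `after logger`, `after auth`: the "before" parts run in list order and the "after" parts in reverse.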

Perhaps the main culprit of this confusion is the Express.js framework guide, which uses titles like “Writing Middleware” and “Using Middleware” even though it internally uses the term middleware function, which is correct.

Middy comes with an impressive list of official middleware decorator plugins plus a long list of 3rd party middleware decorator plugins.

Power Tools for AWS Lambda

Here, the basic building blocks are called Features, which in many cases are Adapters of lower-level SDK functions. The list of features varies by language, with the Python version having the most comprehensive one. Features can be attached to Lambda Handlers using language decorators, used manually, or, in the case of TypeScript, attached using Middy. The term middleware pops up here and there and always means some decorator.

Lambda Middleware

This one is also an implementation of the Pipe-and-Filters Architecture Pattern via the Decorator Design Pattern. Unlike Middy, individual decorators are combined in a pipeline using a special Compose decorator effectively applying the Composite Design Pattern.
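The Composite aspect can be illustrated with a small sketch: a group of decorators is itself a decorator, so groups can be nested and used wherever a single decorator is expected. The code below is an assumption-laden illustration and not the Lambda Middleware package API; `TextHandler`, `TextDecorator`, and `composeDecorators` are hypothetical names, and plain string handlers stand in for Lambda handlers.

```typescript
// Simplified, hypothetical handler and decorator types.
type TextHandler = (input: string) => string;
type TextDecorator = (next: TextHandler) => TextHandler;

// Composite: the result of composing decorators has the same type as
// each part, so a composition can appear inside another composition.
function composeDecorators(...parts: TextDecorator[]): TextDecorator {
  return (next) => parts.reduceRight<TextHandler>((acc, part) => part(acc), next);
}

const trimInput: TextDecorator = (next) => (input) => next(input.trim());
const shout: TextDecorator = (next) => (input) => next(input).toUpperCase();
const exclaim: TextDecorator = (next) => (input) => `${next(input)}!`;

// A nested composition is used exactly like a single decorator.
const formatting = composeDecorators(shout, exclaim);
const decoratedGreeter = composeDecorators(trimInput, formatting)(
  (who) => `hello ${who}`,
);

console.log(decoratedGreeter("  wing  ")); // HELLO WING!
```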

Limitations of existing solutions

Apart from using the incorrect terminology, all three frameworks have certain limitations in common, as follows:

The confusing sequence of operations of multiple Decorators. When more than one decorator is defined, the before operations run in the order of the decorators, but the after operations run in reverse order. With a long list of decorators, that might be a source of serious confusion or even conflicts.

Reliance on environment variables. Control over the operation of particular adapters (e.g. Logger) relies solely on environment variables. To make a change, one will need to redeploy the Lambda Function.

A single list of decorators with some limited control in runtime. There is only one list of decorators per Lambda Function and, if some decorators need to be excluded and replaced depending on the deployment target or run-time environment, a run-time check needs to be performed (look, for example, at how Tracer behavior is controlled in Power Tools for AWS Lambda). This introduces unnecessary run-time overhead and enlarges the potential security attack surface.

Lack of support for higher-level crosscut specifications. All middleware decorators are specified for individual Lambda functions. Common specifications at the organization, organization unit, account, or service levels will require some handmade custom solutions.

Too narrow an interpretation of Middleware as a linear implementation of the Pipe-and-Filters and Decorator design patterns. Power Tools for AWS Lambda does slightly better by introducing its Features, also called Utilities, such as Logger, first and the corresponding decorators second. Middy, on the other hand, treats everything as a decorator. In both cases, the decorators are stacked in one linear sequence, so retrieving two parameters, one from the Secrets Manager and another from the AppConfig, cannot be performed in parallel, while state-of-the-art pipeline builders, such as Marble.js and Async.js, support significantly more advanced forms of control.
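The last limitation is easy to see in a small experiment. The sketch below contrasts a strictly linear retrieval of two parameters with a fork-join stage that starts both lookups at once. It makes no real AWS calls: `fakeFetch` is a hypothetical stand-in for a Secrets Manager or AppConfig lookup, and the values and the 10 ms delay are made up.

```typescript
// Records when each simulated lookup starts and ends.
const fetchLog: string[] = [];

// Hypothetical stand-in for a remote parameter lookup.
function fakeFetch(source: string, value: string): Promise<string> {
  fetchLog.push(`start ${source}`);
  return new Promise((resolve) =>
    setTimeout(() => {
      fetchLog.push(`end ${source}`);
      resolve(value);
    }, 10),
  );
}

// Linear pipeline: the second lookup starts only after the first one ends.
async function loadSequentially(): Promise<[string, string]> {
  const secret = await fakeFetch("secret", "s3cr3t");
  const flag = await fakeFetch("config", "dark-mode");
  return [secret, flag];
}

// Fork-join stage: both lookups are started before either is awaited.
async function loadInParallel(): Promise<[string, string]> {
  return Promise.all([
    fakeFetch("secret", "s3cr3t"),
    fakeFetch("config", "dark-mode"),
  ]);
}

const comparison = (async () => {
  await loadSequentially();
  const sequentialLog = fetchLog.splice(0); // take and clear the log
  const parallelValues = await loadInParallel();
  const parallelLog = fetchLog.splice(0);
  return { sequentialLog, parallelLog, parallelValues };
})();

comparison.then((result) => {
  console.log(result.sequentialLog.join(", "));
  console.log(result.parallelLog.join(", "));
});
```

In the sequential run the second lookup starts only after the first has ended, while in the parallel run both `start` entries are logged before any `end` entry.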

For the Winglang Common Middleware Services Framework (we can now use the correct full name), this list of limitations will serve as a call to action to look for pragmatic ways to overcome them.

Winglang Middleware Direction

Following the “Patterns, Frameworks, and Middleware: Their Synergistic Relationships” article middleware layers taxonomy, the Winglang Common Middleware Services Framework is positioned as follows:

Fig 5: Winglang Middleware Layer

In the diagram above, the Winglang Middleware Layer, code name Winglang MW, is positioned as an upper sub-layer of Common Middleware Services, built on top of Winglang as a representative of Infrastructure-from-Code solutions, which in turn is built on top of the cloud-specific SDK and IaC solutions providing convenient access to the cloud Distribution Middleware.

From the feature set perspective, the Winglang MW is expected:

- To be on par with leading middleware frameworks such as

  - Middy (TypeScript)

  - Power Tools for AWS Lambda (Python, TypeScript, Java, and .NET)

  - Lambda Middleware

- In addition, to provide support for leading open standards such as

- To provide built-in support for cross-cut middleware specifications at different levels above individual cloud functions

- To support run-time fine-tuning of individual feature parameters (e.g. logging level) without corresponding cloud resources redeployment
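One possible way to approach the last expectation, sketched here purely as an assumption and not as the Winglang MW design, is to have a utility consult a settings provider on every call instead of reading an environment variable once at start-up. `TunableLogger`, `LevelProvider`, and the in-memory `logSettings` object are hypothetical; in a real system the provider might query a managed parameter store.

```typescript
type LogLevel = "debug" | "info" | "error";
const levelRank: Record<LogLevel, number> = { debug: 0, info: 1, error: 2 };

// Hypothetical source of the current threshold.
type LevelProvider = () => LogLevel;

class TunableLogger {
  readonly lines: string[] = [];
  private readonly currentLevel: LevelProvider;

  constructor(currentLevel: LevelProvider) {
    this.currentLevel = currentLevel;
  }

  log(level: LogLevel, message: string): void {
    // The threshold is read on every call, so a change takes effect
    // without rebuilding or redeploying the function.
    if (levelRank[level] >= levelRank[this.currentLevel()]) {
      this.lines.push(`[${level}] ${message}`);
    }
  }
}

// An in-memory setting standing in for an externally managed parameter.
const logSettings: { level: LogLevel } = { level: "info" };
const tunableLogger = new TunableLogger(() => logSettings.level);

tunableLogger.log("debug", "hidden while the level is info");
logSettings.level = "debug"; // simulated run-time change
tunableLogger.log("debug", "visible after the change");

console.log(tunableLogger.lines);
```

A production version would also need to cache the looked-up value for some interval, since a remote lookup on every log call would be too expensive.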

Different implementations of Winglang MW will vary in efficiency, ease of use (e.g. middleware pipeline configuration), flexibility, and supplementary tooling such as automatic code generation.

At the current stage, any premature convergence towards a single solution would be detrimental to the Winglang ecosystem evolution, and running multiple experiments in parallel would be more beneficial. Once certain middleware features prove themselves, they might be incorporated into the Winglang core, but it’s advisable not to rush.

For the same reason, I intentionally called this section Direction rather than Requirements or Problem Statement. I have only a general sense of the desirable direction to proceed. Making things too specific could lead to some serendipitous alternatives being missed. I can, however, specify some constraints, as follows:

- Do not count on advanced Winglang features, such as Generics, to come. Generics may significantly complicate the language syntax and too often are introduced before a clear understanding of how much sophistication is required. Also, at the early stages of exploration, the lack of Generics support could be compensated by switching to a general-purpose data type, such as Json, or code generators, including the “C” macros.

- Stick with Winglang and switch to TypeScript for implementing low-level extensions only. As a new language, Winglang lacks features taken for granted in mainstream languages. Giving it a fair chance therefore requires some faith and writing as much code as possible in it, even if that is slightly less convenient. This is the only way for a new programming language to evolve.

- If the development of CLI tools is required, prefer TypeScript over other languages such as Python. I already have the TypeScript toolchain installed on my desktop with all dependencies resolved. It’s always better to limit the number of moving parts in the system to the absolute minimum.

- Limit Winglang middleware implementation to a single process of a Cloud Function. Out-of-proc capabilities, such as AWS Lambda Extensions, can improve overall system performance, security, and reuse (see, for example, this blog post). However, they are not currently supported by Winglang out of the box. Also, utilizing such advanced capabilities will increase the system's complexity while contributing little, if any, at the semantic level. Exploring this direction can be postponed to later stages.

What’s Next?

This publication was completely devoted to clarifying the concept of Middleware, its position within the cloud software system stack, and defining a general direction for developing one or more Winglang Middleware Frameworks.

I plan to devote Part Two of this series to exploring different options for implementing the Pipe-and-Filters Pattern in Middleware and, after that, to start building individual utilities and corresponding filters one by one.

It’s a rare opportunity to revise the generic software infrastructure elements from first principles and to explore the most suitable ways of realizing these principles on the leading modern cloud platforms. If you are interested in taking part in this journey, drop me a line.

Inflight Magazine no. 8

The 8th issue of the Wing Inflight Magazine.

Implementing a Production-Grade CRUD REST API with Winglang

Abstract

This is an experience report on the initial steps of implementing a CRUD (Create, Read, Update, Delete) REST API in Winglang, with a focus on addressing typical production environment concerns such as secure authentication, observability, and error handling. It highlights how Winglang's distinctive features, particularly the separation of Preflight cloud resource configuration from Inflight API request handling, can facilitate more efficient integration of essential middleware components like logging and error reporting. This balance aims to reduce overall complexity and minimize the resulting code size. The applicability of various design patterns, including Pipe-and-Filters, Decorator, and Factory, is evaluated. Finally, future directions for developing a fully-fledged middleware library for Winglang are identified.

Introduction

In my previous publication, I reported on my findings about the possible implementation of the Hexagonal Ports and Adapters pattern in the Winglang programming language using the simplest possible GreetingService sample application. The main conclusions from this evaluation were:

- Cloud resources, such as API Gateway, play the role of drivers (in-) and driven (out-) Ports

- Event handling functions play the role of Adapters leading to a pure Core, which might be implemented in Winglang, TypeScript, or in fact any programming language that compiles into JavaScript and runs on the Node.js runtime engine

Initially, I planned to proceed with exploring possible ways of implementing a more general Staged Event-Driven Architecture (SEDA) in Winglang. However, using the simplest possible GreetingService as an example left some very important architectural questions unanswered. Therefore, I decided to explore in more depth what is involved in implementing a typical Create/Retrieve/Update/Delete (CRUD) service exposing a standardized REST API and addressing typical production environment concerns such as secure authentication, observability, error handling, and reporting.

To prevent domain-specific complexity from distorting the focus on important architectural considerations, I chose the simplest possible TODO service with four operations:

- Retrieve all Tasks (per user)

- Create a new Task

- Completely Replace an existing Task definition

- Delete an existing Task

Using this simple example allowed me to evaluate many important architectural options and to come up with an initial prototype of a middleware library for the Winglang programming language, compatible with and potentially surpassing popular libraries for mainstream programming languages, such as Middy, the Node.js middleware engine for AWS Lambda, and AWS Power Tools for Lambda.

Unlike my previous publication, I will not describe the step-by-step process of how I arrived at the current arrangement. Software architecture and design processes are rarely linear, especially beyond beginner-level tutorials. Instead, I will describe a starting point solution, which, while far from final, is representative enough to sense the direction in which the final framework might eventually evolve. I will outline the requirements I wanted to address and the current architectural decisions, and highlight directions for future research.

Simple TODO in Winglang

Developing a simple, prototype-level TODO REST API service in Winglang is indeed very easy, and could be done within half an hour, using the Winglang Playground:

To keep things simple, I put everything in one source file, even though it could, of course, be split into Core, Ports, and Adapters. Let’s look at the major parts of this sample.

Resource (Ports) Definition

First, we need to define cloud resources, aka Ports, that we are going to use. This is done as follows:

bring ex;

bring cloud;

let tasks = new ex.Table(

name: "Tasks",

columns: {

"id" => ex.ColumnType.STRING,

"title" => ex.ColumnType.STRING

},

primaryKey: "id"

);

let counter = new cloud.Counter();

let api = new cloud.Api();

let path = "/tasks";

Here we define a Winglang Table to keep TODO Tasks with only two columns: task ID and title. To keep things simple, we implement task ID as an auto-incrementing number using the Winglang Counter resource. And finally, we expose the TODO Service API using the Winglang Api resource.

API Request Handlers (Adapters)

Now, we are going to define a separate handler function for each of the four REST API requests. Getting a list of all tasks is implemented as:

api.get(

path,

inflight (request: cloud.ApiRequest): cloud.ApiResponse => {

let rows = tasks.list();

let var result = MutArray<Json>[];

for row in rows {

result.push(row);

}

return cloud.ApiResponse{

status: 200,

headers: {

"Content-Type" => "application/json"

},

body: Json.stringify(result)

};

});

Creating a new task record is implemented as:

api.post(

path,

inflight (request: cloud.ApiRequest): cloud.ApiResponse => {

let id = "{counter.inc()}";

if let task = Json.tryParse(request.body) {

let record = Json{

id: id,

title: task.get("title").asStr()

};

tasks.insert(id, record);

return cloud.ApiResponse {

status: 200,

headers: {

"Content-Type" => "application/json"

},

body: Json.stringify(record)

};

} else {

return cloud.ApiResponse {

status: 400,

headers: {

"Content-Type" => "text/plain"

},

body: "Bad Request"

};

}

});

Updating an existing task is implemented as:

api.put(

"{path}/:id",

inflight (request: cloud.ApiRequest): cloud.ApiResponse => {

let id = request.vars.get("id");

if let task = Json.tryParse(request.body) {

let record = Json{

id: id,

title: task.get("title").asStr()

};

tasks.update(id, record);

return cloud.ApiResponse {

status: 200,

headers: {

"Content-Type" => "application/json"

},

body: Json.stringify(record)

};

} else {

return cloud.ApiResponse {

status: 400,

headers: {

"Content-Type" => "text/plain"

},

body: "Bad Request"

};

}

});

Finally, deleting an existing task is implemented as:

api.delete(

"{path}/:id",

inflight (request: cloud.ApiRequest): cloud.ApiResponse => {

let id = request.vars.get("id");

tasks.delete(id);

return cloud.ApiResponse {

status: 200,

headers: {

"Content-Type" => "text/plain"

},

body: ""

};

});

We could play with this API using the Winglang Simulator:

We could write one or more tests to validate the API automatically:

bring http;

bring expect;

let url = "{api.url}{path}";

test "run simple crud scenario" {

let r1 = http.get(url);

expect.equal(r1.status, 200);

let r1_tasks = Json.parse(r1.body);

expect.nil(r1_tasks.tryGetAt(0));

let r2 = http.post(url, body: Json.stringify(Json{title: "First Task"}));

expect.equal(r2.status, 200);

let r2_task = Json.parse(r2.body);

expect.equal(r2_task.get("title").asStr(), "First Task");

let id = r2_task.get("id").asStr();

let r3 = http.put("{url}/{id}", body: Json.stringify(Json{title: "First Task Updated"}));

expect.equal(r3.status, 200);

let r3_task = Json.parse(r3.body);

expect.equal(r3_task.get("title").asStr(), "First Task Updated");

let r4 = http.delete("{url}/{id}");

expect.equal(r4.status, 200);

}

Last but not least, this service can be deployed on any supported cloud platform using the Winglang CLI. The code for the TODO Service is completely cloud-neutral, ensuring compatibility across different platforms without modification.

Should there be a need to expand the task details or link them to other system entities, the approach remains largely unaffected, provided the operations adhere to straightforward CRUD logic and can be executed within a 29-second timeout limit.

This example unequivocally demonstrates that the Winglang programming environment is a top-notch tool for the rapid development of such services. If this is all you need, you need not read further. What follows is a kind of White Rabbit hole of multiple non-functional concerns that need to be addressed before we can even start talking about serious production deployment.

You are warned. The forthcoming text is not for everybody, but rather for seasoned cloud software architects.

Usability

The TODO sample service implementation presented above is a so-called Headless REST API. This approach focuses on core functionality, leaving user experience design to separate layers. This is often implemented as Client-Side Rendering or Server-Side Rendering with an intermediate Backend for Frontend tier, or by using multiple narrow-focused REST API services functioning as GraphQL Resolvers. Each approach has its merits for specific contexts.

I advocate for supporting HTTP Content Negotiation and providing a minimal UI for direct API interaction via a browser. While tools like Postman or Swagger can facilitate API interaction, experiencing the API as an end user offers invaluable insights. This basic UI, or what I refer to as an "engineering UI," often suffices.
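To make the idea of content negotiation more concrete, here is a minimal TypeScript sketch of choosing a response formatter from the Accept header. It is an illustration under stated assumptions, not the code of the actual prototype: the `formatters` table, `parseAccept`, and `negotiate` are hypothetical names, the fallback to JSON is an arbitrary choice, and HTML escaping is deliberately left out.

```typescript
type Task = { taskID: number; title: string };
type Formatter = (tasks: Task[]) => string;

// Hypothetical formatter table keyed by media type (no HTML escaping: sketch only).
const formatters: { [mediaType: string]: Formatter } = {
  "application/json": (tasks) => JSON.stringify(tasks),
  "text/html": (tasks) =>
    "<ul>" + tasks.map((t) => "<li>" + t.title + "</li>").join("") + "</ul>",
  "text/plain": (tasks) => tasks.map((t) => t.title).join("\n"),
};

// Parse an Accept header into media types ordered by descending q-value.
function parseAccept(header: string): string[] {
  return header
    .split(",")
    .map((part, index) => {
      const pieces = part.trim().split(";");
      let q = 1;
      for (const p of pieces.slice(1)) {
        const kv = p.trim().split("=");
        if (kv[0] === "q" && kv.length === 2) {
          const parsed = parseFloat(kv[1]);
          if (!isNaN(parsed)) q = parsed;
        }
      }
      return { type: pieces[0].trim().toLowerCase(), q, index };
    })
    .filter((e) => e.type.length > 0 && e.q > 0)
    // Keep the original order for entries with equal q-values.
    .sort((a, b) => b.q - a.q || a.index - b.index)
    .map((e) => e.type);
}

// Pick the first supported media type; assume JSON for "*/*" or a missing header.
function negotiate(accept: string | undefined): string | undefined {
  if (!accept || accept.trim() === "") return "application/json";
  for (const type of parseAccept(accept)) {
    if (type === "*/*") return "application/json";
    if (Object.prototype.hasOwnProperty.call(formatters, type)) return type;
  }
  return undefined; // the caller would answer 406 Not Acceptable
}
```

A browser that sends `text/html` first would get the engineering UI, while a script asking for `application/json` would get the headless representation from the same endpoint.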

In this context, anything beyond simple Server-Side Rendering deployed alongside headless protocol serialization, such as JSON, might be unnecessarily complex. While Winglang provides a Website cloud resource for web client assets (HTML pages, JavaScript, CSS), utilizing it for such purposes introduces additional complexity and cost.

A simpler solution would involve basic HTML templates, enhanced with HTMX's features and a CSS framework like Bootstrap. Currently, Winglang does not natively support HTML templates, but for basic use cases, this can be easily managed with TypeScript. For instance, rendering a single task line could be implemented as follows:

import { TaskData } from "core/task";

export function formatTask(path: string, task: TaskData): string {

return `

<li class="list-group-item d-flex justify-content-between align-items-center">

<form hx-put="${path}/${task.taskID}" hx-headers='{"Accept": "text/plain"}' id="${task.taskID}-form">

<span class="task-text">${task.title}</span>

<input

type="text"

name="title"

class="form-control edit-input"

style="display: none;"

value="${task.title}">

</form>

<div class="btn-group">

<button class="btn btn-danger btn-sm delete-btn"

hx-delete="${path}/${task.taskID}"

hx-target="closest li"

hx-swap="outerHTML"

hx-headers='{"Accept": "text/plain"}'>✕</button>

<button class="btn btn-primary btn-sm edit-btn">✎</button>

</div>

</li>

`;

}

That would result in the following UI screen:

Not super-fancy, but good enough for demo purposes.

Even purely Headless REST APIs require strong usability considerations. API calls should follow REST conventions for HTTP methods, URL formats, and payloads. Proper documentation of HTTP methods and potential error handling are crucial. Client and server errors need to be logged, converted into appropriate HTTP status codes, and accompanied by clear explanation messages in the response body.

The need to handle multiple request parsers and response formatters based on content negotiation using Content-Type and Accept headers in HTTP requests led me to the following design approach:

Adhering to the Dependency Inversion Principle ensures that the system Core is completely isolated from Ports and Adapters. While there might be an inclination to encapsulate the Core within a generic CRUD framework, defined by a ResourceData type, I advise caution. This recommendation stems from several considerations:

- In practice, even CRUD request processing often entails complexities that extend beyond basic operations.

- The Core should not rely on any specific framework, preserving its independence and adaptability.

- The creation of such a framework would necessitate support for Generic Programming, a feature not currently supported by Winglang.

Another option would be to abandon the Core data types definition and rely entirely on untyped JSON interfaces, akin to a Lisp-like programming style. However, given Winglang's strong typing, I decided against this approach.

Overall, the TodoHandler class is quite simple and easy to understand:

bring "./data.w" as data;

bring "./parser.w" as parser;

bring "./formatter.w" as formatter;

pub class TodoHandler {

_path: str;

_parser: parser.TodoParser;

_tasks: data.ITaskDataRepository;

_formatter: formatter.ITodoFormatter;

new(

path: str,

tasks_: data.ITaskDataRepository,

parser: parser.TodoParser,

formatter: formatter.ITodoFormatter,

) {

this._path = path;

this._tasks = tasks_;

this._parser = parser;

this._formatter = formatter;

}

pub inflight getHomePage(user: Json, outFormat: str): str {

let userData = this._parser.parseUserData(user);

return this._formatter.formatHomePage(outFormat, this._path, userData);

}

pub inflight getAllTasks(user: Json, query: Map<str>, outFormat: str): str {

let userData = this._parser.parseUserData(user);

let tasks = this._tasks.getTasks(userData.userID);

return this._formatter.formatTasks(outFormat, this._path, tasks);

}

pub inflight createTask(

user: Json,

body: str,

inFormat: str,

outFormat: str

): str {

let taskData = this._parser.parsePartialTaskData(user, body);

this._tasks.addTask(taskData);

return this._formatter.formatTasks(outFormat, this._path, [taskData]);

}

pub inflight replaceTask(

user: Json,

id: str,

body: str,

inFormat: str,

outFormat: str

): str {

let taskData = this._parser.parseFullTaskData(user, id, body);

this._tasks.replaceTask(taskData);

return taskData.title;

}

pub inflight deleteTask(user: Json, id: str): str {

let userData = this._parser.parseUserData(user);

this._tasks.deleteTask(userData.userID, num.fromStr(id));

return "";

}

}

As you might notice, the code structure deviates slightly from the design diagram presented earlier. These minor adaptations are normal in software design; new insights emerge throughout the process, necessitating adjustments. The most notable difference is the user: Json argument defined for every function. We'll discuss the purpose of this argument in the next section.

Security

Exposing the TODO service to the internet without security measures is a recipe for disaster. Hackers, bored teens, and professional attackers will quickly target its public IP address. The rule is very simple:

Any public interface must be protected unless exposed for a very short testing period. Security is non-negotiable.

Conversely, overloading a service with every conceivable security measure can lead to prohibitively high operational costs. As I've argued in previous writings, making architects accountable for the costs of their designs might significantly reshape their approach:

If cloud solution architects were responsible for the costs incurred by their systems, it could fundamentally change their design philosophy.

What we need is reasonable protection of the service API, no less but no more either. Since I wanted to experiment with a full-stack Server-Side Rendering UI, my natural choice was to enforce user login at the beginning, produce a JWT with a reasonable expiration, say one hour, and then use it to authenticate all subsequent HTTP requests.

Given the specifics of Server-Side Rendering, using an HTTP Cookie to carry the session token was a natural choice (to be honest, suggested by ChatGPT). For the Client-Side Rendering option, I might need to use a Bearer Token delivered via the Authorization field of the HTTP request headers.

With session tokens now incorporating user information, I could aggregate TODO tasks by user. Although there are numerous ways to integrate session data, including user details, into the domain, I chose to focus on the userID and fullName attributes for this study.
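The two token transport options mentioned above (Cookie for Server-Side Rendering, Bearer Token for Client-Side Rendering) can be sketched in TypeScript as a single extraction step. This is only an illustration: the cookie name `session`, the `HeaderMap` type, and both function names are assumptions, header names are assumed to be already lower-cased, and token signature and expiration checks are intentionally left out.

```typescript
type HeaderMap = { [headerName: string]: string | undefined };

// Parse a Cookie header ("a=1; b=2") into a name -> value dictionary.
function parseCookies(header: string): { [cookieName: string]: string } {
  const cookies: { [cookieName: string]: string } = {};
  for (const pair of header.split(";")) {
    const eq = pair.indexOf("=");
    if (eq < 0) continue; // skip malformed fragments
    const key = pair.slice(0, eq).trim();
    if (key.length > 0) cookies[key] = pair.slice(eq + 1).trim();
  }
  return cookies;
}

// Look for the token in the session cookie first (Server-Side Rendering),
// then in "Authorization: Bearer <token>" (Client-Side Rendering).
function extractSessionToken(
  headers: HeaderMap,
  cookieName: string = "session" // hypothetical cookie name
): string | undefined {
  const cookieHeader = headers["cookie"];
  if (cookieHeader) {
    const token = parseCookies(cookieHeader)[cookieName];
    if (token) return token;
  }
  const auth = headers["authorization"];
  if (auth) {
    const parts = auth.trim().split(/\s+/);
    if (parts.length === 2 && parts[0].toLowerCase() === "bearer") {
      return parts[1];
    }
  }
  return undefined; // no token: the caller would redirect to login or answer 401
}
```

Keeping extraction separate from validation means that switching between the two transports does not touch the code that verifies the token or reads the userID and fullName claims.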

For user authentication, several options are available, especially within the AWS ecosystem:

- AWS Cognito, utilizing its User Pools or integration with external Identity Providers like Google or Facebook.

- Third-party authentication services such as Auth0.

- A custom authentication service fully developed in Winglang.

- AWS Identity Center

- …

As an independent software technology researcher, I gravitate towards the simplest solutions with the fewest components, which also address daily operational needs. Leveraging the AWS Identity Center, as detailed in a separate publication, was a logical step due to my existing multi-account/multi-user setup.

After integration, my AWS Identity Center main screen looks like this:

That means that in my system, users, whether myself or guests, can use the same AWS credentials for development, administration, and sample or housekeeping applications.

To integrate with AWS Identity Center, I needed to register my application and provide a new endpoint implementing the so-called “Assertion Consumer Service URL (ACS URL)”. This publication is not about the SAML standard. Suffice it to say that with ChatGPT and Google search assistance, it could be done. Some useful information can be found here. What came in very handy was the TypeScript samlify library, which encapsulates all the heavy lifting of the SAML Login Response validation process.

What I’m mostly interested in is how this variability point affects the overall system design. Let’s try to visualize it using a semi-formal data flow notation:

While it might seem unusual, this representation reflects with high fidelity how data flows through the system. What we see here is a special instance of the famous Pipe-and-Filters architectural pattern.

Here, data flows through a pipeline and each filter performs one well-defined task, in effect following the Single Responsibility Principle. Such an arrangement allows me to replace filters should I want to switch to simple Basic HTTP Authentication, use the HTTP Authorization header, or apply a different secret management policy for JWT building and validation.
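For readers who prefer code to diagrams, the pipeline idea can be approximated in TypeScript by treating each filter as a function that wraps the next handler. This is a generic sketch, not the Winglang implementation discussed in this publication; the `HttpRequest`, `HttpResponse`, `Handler`, and `Filter` types and both sample filters are hypothetical.

```typescript
type HttpRequest = { method: string; path: string; headers: { [k: string]: string }; body: string };
type HttpResponse = { status: number; headers: { [k: string]: string }; body: string };
type Handler = (request: HttpRequest) => HttpResponse;
// A filter wraps the next handler and returns a new handler.
type Filter = (next: Handler) => Handler;

// Compose filters so that the first one in the list sees the request first
// and the response last.
function pipeline(filters: Filter[], core: Handler): Handler {
  return filters.reduceRight<Handler>((next, filter) => filter(next), core);
}

// Hypothetical filter: rejects requests that carry no session cookie.
const requireSession: Filter = (next) => (request) => {
  if (!request.headers["cookie"]) {
    return { status: 401, headers: { "Content-Type": "text/plain" }, body: "Unauthorized" };
  }
  return next(request);
};

// Hypothetical filter: stamps every response with a service header.
const addServiceHeader: Filter = (next) => (request) => {
  const response = next(request);
  return { ...response, headers: { ...response.headers, "X-Service": "todo" } };
};

// The core handler knows nothing about the filters around it.
const listTasks: Handler = () => ({
  status: 200,
  headers: { "Content-Type": "application/json" },
  body: "[]",
});

const todoApp = pipeline([addServiceHeader, requireSession], listTasks);
```

Replacing `requireSession` with another filter of the same shape changes the authentication strategy without touching `listTasks` or the other filters, which is the property the pattern is meant to provide.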

If we zoom into Parse and Format filters, we will see a typical dispatch logic using Content-Type and Accept HTTP headers respectively:

Many engineers confuse design and architectural patterns with specific implementations. This misses the essence of what patterns are meant to achieve.

Patterns are about identifying a suitable approach to balance conflicting forces with minimal intervention. In the context of building cloud-based software systems, where security is paramount but should not be overpriced in terms of cost or complexity, this understanding is crucial. The Pipe-and-Filters design pattern helps with addressing such design challenges effectively. It allows for modularization and flexible configuration of processing steps, which in this case, relate to authentication mechanisms.

For instance, while robust security measures like SAML authentication are necessary for production environments, they may introduce unnecessary complexity and overhead in scenarios such as automated end-to-end testing. Here, simpler methods like Basic HTTP Authentication may suffice, providing a quick and cost-effective solution without compromising the system's overall integrity. The goal is to maintain the system's core functionality and code base uniformity while varying the authentication strategy based on the environment or specific requirements.
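As an illustration of how small the Basic HTTP Authentication variant can be, here is a dependency-free TypeScript sketch that parses the Authorization header in the format defined by RFC 7617 and selects an authentication strategy by mode. The `AuthMode` switch, the in-memory `users` table, and all function names are assumptions made for this example; a real deployment would not keep plain-text passwords in memory, and the session branch is deliberately left as a stub.

```typescript
const B64_ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/";

// Minimal base64 decoder (ASCII/Latin-1 payloads only) to keep the sketch self-contained.
function decodeBase64(input: string): string {
  const clean = input.replace(/=+$/, "");
  let bits = 0;
  let bitCount = 0;
  let out = "";
  for (let i = 0; i < clean.length; i++) {
    const value = B64_ALPHABET.indexOf(clean.charAt(i));
    if (value < 0) throw new Error("invalid base64 character");
    bits = ((bits << 6) | value) & 0xffff; // keep only the bits still needed
    bitCount += 6;
    if (bitCount >= 8) {
      bitCount -= 8;
      out += String.fromCharCode((bits >> bitCount) & 0xff);
    }
  }
  return out;
}

type BasicCredentials = { user: string; password: string };

// Parse "Authorization: Basic <base64(user:password)>".
function parseBasicAuth(header: string | undefined): BasicCredentials | undefined {
  if (!header) return undefined;
  const parts = header.trim().split(/\s+/);
  if (parts.length !== 2 || parts[0].toLowerCase() !== "basic") return undefined;
  let decoded: string;
  try {
    decoded = decodeBase64(parts[1]);
  } catch (e) {
    return undefined;
  }
  const colon = decoded.indexOf(":");
  if (colon < 0) return undefined;
  return { user: decoded.slice(0, colon), password: decoded.slice(colon + 1) };
}

// Hypothetical switch between authentication strategies.
type AuthMode = "basic" | "session";
type Authenticator = (headers: { [k: string]: string | undefined }) => string | undefined;

function makeAuthenticator(mode: AuthMode, users: { [user: string]: string }): Authenticator {
  if (mode === "basic") {
    return (headers) => {
      const c = parseBasicAuth(headers["authorization"]);
      return c && users[c.user] === c.password ? c.user : undefined;
    };
  }
  // Session (cookie + JWT) validation is out of scope for this sketch.
  return () => undefined;
}
```

The rest of the request handling only sees the authenticated user name (or its absence), so end-to-end tests can run with the `basic` mode while production uses the session-based one.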

Winglang's unique Preflight compilation feature facilitates this by allowing for configuration adjustments at the build stage, eliminating runtime overhead. This capability presents a significant advantage of Winglang-based solutions over other middleware libraries, such as Middy and AWS Power Tools for Lambda, by offering a more efficient and flexible approach to managing the authentication pipeline.

Implementing Basic HTTP Authentication, therefore, only requires modifying a single filter within the authentication pipeline, leaving the remainder of the system unchanged:

Due to some technical limitations, it’s currently not possible to implement Pipe-and-Filters in Winglang directly, but it could be quite easily simulated by a combination of Decorator and Factory design patterns. How exactly, we will see shortly. Now, let’s proceed to the next topic.

Operation

In this publication, I’m not going to cover all aspects of production operation. The topic is large and deserves a separate publication of its own. Below is what I consider the bare minimum:

To operate a service, we need to know what happens with it, especially when something goes wrong. This is achieved via a Structured Logging mechanism. At the moment, Winglang provides only a basic log(str) function. For my investigation, I needed more and implemented a poor man's structured logging class:

// A poor man implementation of configurable Logger

// Similar to that of Python and TypeScript

bring cloud;

bring "./dateTime.w" as dateTime;

pub enum logging {

TRACE,

DEBUG,

INFO,

WARNING,

ERROR,

FATAL

}

//This is just enough configuration

//A serious review including compliance

//with OpenTelemetry and privacy regulations

//Is required. The main insight:

//Serverless Cloud logging is substantially

//different

pub interface ILoggingStrategy {

inflight timestamp(): str;

inflight print(message: Json): void;

}

pub class DefaultLoggerStrategy impl ILoggingStrategy {

pub inflight timestamp(): str {

return dateTime.DateTime.toUtcString(std.Datetime.utcNow());

}

pub inflight print(message: Json): void {

log("{message}");

}

}

//TBD: probably should go into a separate module

bring expect;

bring ex;

pub class MockLoggerStrategy impl ILoggingStrategy {

_name: str;

_counter: cloud.Counter;

_messages: ex.Table;

new(name: str?) {

this._name = name ?? "MockLogger";

this._counter = new cloud.Counter();

this._messages = new ex.Table(

name: "{this._name}Messages",

columns: Map<ex.ColumnType>{

"id" => ex.ColumnType.STRING,

"message" => ex.ColumnType.STRING

},

primaryKey: "id"

);

}

pub inflight timestamp(): str {

return "{this._counter.inc(1, this._name)}";

}

pub inflight expect(messages: Array<Json>): void {

for message in messages {

this._messages.insert(

message.get("timestamp").asStr(),

Json{ message: "{message}"}

);

}

}

pub inflight print(message: Json): void {

let expected = this._messages.get(

message.get("timestamp").asStr()

).get("message").asStr();

expect.equal("{message}", expected);

}

}

pub class Logger {

_labels: Array<str>;

_levels: Array<logging>;

_level: num;

_service: str;

_strategy: ILoggingStrategy;

new (level: logging, service: str, strategy: ILoggingStrategy?) {

this._labels = [

"TRACE",

"DEBUG",

"INFO",

"WARNING",

"ERROR",

"FATAL"

];

this._levels = Array<logging>[

logging.TRACE,

logging.DEBUG,

logging.INFO,

logging.WARNING,

logging.ERROR,

logging.FATAL

];

this._level = this._levels.indexOf(level);

this._service = service;

this._strategy = strategy ?? new DefaultLoggerStrategy();

}

pub inflight log(level_: logging, func: str, message: Json): void {

let level = this._levels.indexOf(level_);

let label = this._labels.at(level);

if this._level <= level {

this._strategy.print(Json {

timestamp: this._strategy.timestamp(),

level: label,

service: this._service,

function: func,

message: message

});

}

}

pub inflight trace(func: str, message: Json): void {

this.log(logging.TRACE, func,message);

}

pub inflight debug(func: str, message: Json): void {

this.log(logging.DEBUG, func, message);

}

pub inflight info(func: str, message: Json): void {

this.log(logging.INFO, func, message);

}

pub inflight warning(func: str, message: Json): void {

this.log(logging.WARNING, func, message);

}

pub inflight error(func: str, message: Json): void {

this.log(logging.ERROR, func, message);

}

pub inflight fatal(func: str, message: Json): void {

this.log(logging.FATAL, func, message);

}

}

There is nothing spectacular here and, as I wrote in the comments, a cloud-based logging system requires a serious revision. Still, it’s enough for the current investigation. I’m fully convinced that logging is an integral part of any service specification and has to be tested with the same rigor as core functionality. For that purpose, I developed a simple mechanism to mock logs and check them against expectations.

For a REST API CRUD service, we need to log at least three types of things:

- HTTP Request

- Original error message, if something went wrong

- HTTP Response

In addition, depending on needs, the original error message might have to be converted into a standard one, for example, so as not to educate attackers.

How much detail to log, if any, depends on multiple factors: deployment target, type of request, specific user, type of error, statistical sampling, etc. In development and test mode, we will normally opt for logging almost everything and returning the original error message directly to the client screen to ease debugging. In production mode, we might opt to remove some sensitive data because of regulatory requirements, to return a general error message, such as “Bad Request”, without any details, and to apply only statistical sample logging for particular types of requests to save costs.
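The trade-offs described above could be captured in a small per-target policy. The TypeScript sketch below is only one possible shape: the `LogPolicy` fields, the `dev` and `prod` values, and the helper names are hypothetical and are not taken from the prototype.

```typescript
type LogPolicy = {
  sampleRate: number;          // fraction of requests to log, between 0 and 1
  redactFields: string[];      // field names to mask before logging
  exposeErrorDetails: boolean; // return the original error text to the client?
};

// Hypothetical per-target policies; the values are illustrative, not recommendations.
const policies: { [target: string]: LogPolicy } = {
  dev: { sampleRate: 1, redactFields: [], exposeErrorDetails: true },
  prod: { sampleRate: 0.05, redactFields: ["password", "token"], exposeErrorDetails: false },
};

// Decide whether to log this request; `random` is injectable so tests stay deterministic.
function shouldLog(policy: LogPolicy, random: () => number = Math.random): boolean {
  return random() < policy.sampleRate;
}

// Return a copy of the record with sensitive fields masked.
function redact(
  record: { [field: string]: unknown },
  policy: LogPolicy
): { [field: string]: unknown } {
  const copy: { [field: string]: unknown } = {};
  for (const key of Object.keys(record)) {
    copy[key] = policy.redactFields.indexOf(key) >= 0 ? "***" : record[key];
  }
  return copy;
}

// Choose what the client sees: the original message or a generic one.
function clientMessage(policy: LogPolicy, original: string): string {
  return policy.exposeErrorDetails ? original : "Bad Request";
}
```

Because such a policy is plain data, it could be selected once per deployment target rather than consulted through conditionals scattered across request handlers.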

Flexible logging configuration was achieved by injecting four additional filters in every request handling pipeline:

- HTTP Request logging filter

- Try/Catch Decorator to convert exceptions if any into HTTP status codes and to log original error messages (this could be extracted into a separate filter, but I decided to keep things simple)

- Error message translator to convert original error messages into standard ones if required

- HTTP Response logging filter

This structure, although not an ultimate one, provides enough flexibility to implement a wide range of logging and error-handling strategies depending on the service and its deployment target specifics.
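To show how the four filters listed above might fit together, here is a rough TypeScript approximation. It is a sketch under assumptions rather than the Winglang code of the prototype: errors are modeled as plain objects with a `tag` and an optional `message`, the mapping of `ValueError`, `KeyError`, and `NotImplementedError` to status codes is my own guess (only 401 and 403 follow the comments in the exception classes), and all names are hypothetical.

```typescript
type HttpRequest = { method: string; path: string; body: string };
type HttpResponse = { status: number; body: string };
type Handler = (request: HttpRequest) => HttpResponse;
type Filter = (next: Handler) => Handler;
type LogFn = (record: { [k: string]: unknown }) => void;
type TaggedError = { tag: string; message?: string };

// Assumed mapping from error tags to HTTP status codes.
const statusByTag: { [tag: string]: number } = {
  ValueError: 400,
  AuthenticationError: 401,
  AuthorizationError: 403,
  KeyError: 404,
  NotImplementedError: 501,
};

function isTagged(e: unknown): e is TaggedError {
  return typeof e === "object" && e !== null && typeof (e as TaggedError).tag === "string";
}

// 1. HTTP Request logging filter.
const logRequest = (log: LogFn): Filter => (next) => (request) => {
  log({ event: "request", method: request.method, path: request.path });
  return next(request);
};

// 2. Try/Catch decorator: logs the original error and converts it into a status code.
const catchErrors = (log: LogFn): Filter => (next) => (request) => {
  try {
    return next(request);
  } catch (e) {
    const err: TaggedError = isTagged(e) ? e : { tag: "InternalError", message: String(e) };
    log({ event: "error", tag: err.tag, message: err.message });
    return { status: statusByTag[err.tag] || 500, body: err.message || err.tag };
  }
};

// 3. Error message translator: replaces bodies for the listed status codes.
const translateErrors = (generic: { [status: number]: string }): Filter => (next) => (request) => {
  const response = next(request);
  const replacement = generic[response.status];
  return replacement ? { status: response.status, body: replacement } : response;
};

// 4. HTTP Response logging filter.
const logResponse = (log: LogFn): Filter => (next) => (request) => {
  const response = next(request);
  log({ event: "response", status: response.status });
  return response;
};

// The first filter in the list is the outermost one.
function pipeline(filters: Filter[], core: Handler): Handler {
  return filters.reduceRight<Handler>((next, filter) => filter(next), core);
}
```

In this arrangement, the translator has to sit outside the Try/Catch decorator so that it sees the converted response, and the response logger sits outside both so that it records what the client actually receives.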

As with logs, Winglang at the moment provides only a basic throw <str> operator, so I decided to implement my own poor man's version of structured exceptions:

// A poor man structured exceptions

pub inflight class Exception {

pub tag: str;

pub message: str?;

new(tag: str, message: str?) {

this.tag = tag;

this.message = message;

}

pub raise() {

let err = Json.stringify(this);

throw err;

}

pub static fromJson(err: str): Exception {

let je = Json.parse(err);

return new Exception(

je.get("tag").asStr(),

je.tryGet("message")?.tryAsStr()

);

}

pub toJson(): Json { //for logging

return Json{tag: this.tag, message: this.message};

}

}

// Standard exceptions, similar to those of Python

pub inflight class KeyError extends Exception {

new(message: str?) {

super("KeyError", message);

}

}

pub inflight class ValueError extends Exception {

new(message: str?) {

super("ValueError", message);

}

}

pub inflight class InternalError extends Exception {

new(message: str?) {

super("InternalError", message);

}

}

pub inflight class NotImplementedError extends Exception {

new(message: str?) {

super("NotImplementedError", message);

}

}

//Two more HTTP-specific, yet useful

pub inflight class AuthenticationError extends Exception {

//aka HTTP 401 Unauthorized

new(message: str?) {

super("AuthenticationError", message);

}

}

pub inflight class AuthorizationError extends Exception {

//aka HTTP 403 Forbidden

new(message: str?) {

super("AuthorizationError", message);

}

}

These experiences highlight how the developer community can bridge gaps in new languages with temporary workarounds. Winglang is still evolving, but its innovative features can be harnessed for progress despite the language's young age.

Now, it’s time to take a brief look at the last production topic on my list, namely

Scale

Scaling is a crucial aspect of cloud development, but it's often misunderstood. Some neglect it entirely, leading to problems when the system grows. Others over-engineer, aiming to be a "FANG" system from day one. The proclamation "We run everything on Kubernetes" is a common refrain in technical circles, regardless of whether it's appropriate for the project at hand.

Neither extreme, neglect or over-engineering, is ideal. Like security, scaling shouldn't be ignored, but it also shouldn't be over-emphasized.

Up to a certain point, cloud platforms provide cost-effective scaling mechanisms. Often, the choice between different options boils down to personal preference or inertia rather than significant technical advantages.

The prudent path involves starting small and cost-effectively, scaling out based on real-world usage and performance data, rather than assumptions. This approach necessitates a system designed for easy configuration changes to accommodate scaling, something not inherently supported by Winglang but certainly within the realm of feasibility through further development and research. As an illustration, let's consider scaling within the AWS ecosystem:

- Initially, a cost-effective and swift deployment might involve a single Lambda Function URL for a full-stack CRUD API with Server-Side Rendering, using an S3 Bucket for storage. This setup enables rapid feedback essential for early development stages. Personally, I favor a "UX First" approach over "API First." You might be surprised how far you can get with this basic technology stack. While Winglang doesn't currently support Lambda Function URLs, I believe it could be achieved with filter combinations and system adjustments. At this level, following Marc Van Neerven's recommendation to use standard Web Components instead of heavy frameworks could be beneficial. This is a subject for future exploration.

- Transitioning to an API Gateway or GraphQL Gateway becomes relevant when external API exposure or advanced features like WebSockets are required. If the initial data storage solution becomes a bottleneck, it might be time to consider switching to a more robust and scalable solution like DynamoDB. At this point, deploying separate Lambda Functions for each API request might offer simplicity in implementation, though it's not always the most cost-effective strategy.

- The move to containerized solutions should be data-driven, considered only when there's clear evidence that the function-based architecture is either too costly or suffers from latency issues due to cold starts. An initial foray into containers might involve using ECS Fargate for its simplicity and cost-effectiveness, reserving EKS for scenarios with specific operational needs that require its capabilities. This evolution should ideally be managed through configuration adjustments and automated filter generation, leveraging Winglang's unique capabilities to support dynamic scaling strategies.

In essence, Winglang's approach, emphasizing the Preflight and Inflight stages, holds promise for facilitating these scaling strategies, although it may still be in the early stages of fully realizing this potential. This exploration of scalability within cloud software development emphasizes starting small, basing decisions on actual data, and remaining flexible in adapting to changing requirements.

Concluding Remarks

In the mid-1990s, I learned about Commonality Variability Analysis from Jim Coplien. Since then, this approach, alongside Edsger W. Dijkstra's Layered Architecture, has been a cornerstone of my software engineering practices. Commonality Variability Analysis asks: "In our system, which parts will always be the same and which might need to change?" The Open-Closed Principle dictates that variable parts should be replaceable without modifying the core system.

Deciding when to finalize the stable aspects of a system involves navigating the trade-off between flexibility and efficiency, with several stages from code generation to runtime offering opportunities for fixation. Dynamic language proponents might delay these decisions to runtime for maximum flexibility, whereas advocates for static, compiled languages typically secure crucial system components as early as possible.

Winglang, with its unique Preflight compilation phase, stands out by allowing cloud resources to be fixed early in the development process. In this publication, I explored how Winglang enables addressing non-functional aspects of cloud services through a flexible pipeline of filters, though this granularity introduces its own complexity. The challenge now becomes managing this complexity without compromising the system's efficiency or flexibility.

While the final solution is a work in progress, I can outline a high-level design that balances these forces:

This design combines several software Design Patterns to achieve the desired balance. The process involves:

- The Pipeline Builder component is responsible for preparing a final set of Preflight components.

- The Pipeline Builder reads a Configuration which might be organized as a Composite (think team-wide or organization-wide configuration).

- Configurations specify capability requirements for resources (e.g., loggers).

- Each Resource has several Specifications each one defining conditions under which a Factory needs to be invoked to produce the required Filter. Three filter types are envisioned:

- Raw HTTP Request/Response Filter

- Extended HTTP Request/Response Filter with session information extracted after token validation

- Generic CRUD requests filter to be forwarded to Core

This approach shifts complexity towards implementing the Pipeline Builder machinery and the Configuration specification. Experience shows that such machinery can be implemented (as described, for example, in this publication). It normally requires some generic programming and dynamic import capabilities. Coming up with a good configuration data model is more challenging.
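The roles described above can be sketched in a few lines of TypeScript. This is not the final design, only an illustration under simplifying assumptions: every name (HttpRequest, Filter, Specification, Config, mergeConfigs, buildPipeline) is hypothetical, the three filter types are collapsed into a single function shape, and the Composite configuration is modelled as plain key/value layers where later layers override earlier ones.

```typescript
// Simplified request shape; "trace" records which filters ran.
type HttpRequest = { path: string; headers: Record<string, string>; trace: string[] };
type Filter = (req: HttpRequest) => HttpRequest;
type Config = Record<string, string>;

// A Specification decides whether its Factory should contribute a Filter.
interface Specification {
  resource: string; // e.g. "logger"
  applies(config: Config): boolean;
  factory(config: Config): Filter;
}

// Composite configuration: later layers (team) override earlier ones (organization).
function mergeConfigs(...layers: Config[]): Config {
  return Object.assign({}, ...layers);
}

// Pipeline Builder: selects the applicable factories once and composes their filters.
function buildPipeline(config: Config, specs: Specification[]): Filter {
  const filters = specs.filter((s) => s.applies(config)).map((s) => s.factory(config));
  return (req) => filters.reduce((acc, f) => f(acc), req);
}

const specs: Specification[] = [
  {
    resource: "logger",
    applies: (c) => c["logging"] === "on",
    factory: (c) => (req) => ({
      ...req,
      trace: [...req.trace, `log:${c["logLevel"] || "info"}`],
    }),
  },
  {
    resource: "auth",
    applies: (c) => c["auth"] === "jwt",
    factory: () => (req) => ({ ...req, trace: [...req.trace, "auth:jwt"] }),
  },
];

const orgConfig: Config = { logging: "on", logLevel: "info", auth: "none" };
const teamConfig: Config = { logLevel: "debug" };

const pipeline = buildPipeline(mergeConfigs(orgConfig, teamConfig), specs);
const processed = pipeline({ path: "/items", headers: {}, trace: [] });
```

Here only the logger filter is built, because the merged configuration leaves auth set to "none", and the team layer raises the log level to "debug". Even in this toy form, the hard part is visible: the builder is a few lines, while the meaning of the configuration keys has to be designed.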

Recent advances in generative AI-based copilots raise new questions about achieving the most cost-efficient outcome. To understand the problem, let's revisit the traditional compilation and configuration stack:

This general case may not apply to every ecosystem. Here's a breakdown of the typical layers:

- The Core Language is designed to be small (the "C" and Lisp tradition). It may or may not provide support for Reflection.

- As many extended capabilities as possible are provided by the Standard Library and by 3rd Party Libraries and Frameworks.

- Generic Meta-Programming: Support for features like C++ templates or Lisp macros is introduced early (C++, Rust) or later (Java, C#). Generics are a source of ongoing debate:

- Framework developers find them insufficiently expressive.

- Application developers struggle with their complexity.

- Scala exemplifies the potential downsides of overly complex generics.

- Despite criticism, macros (e.g., C preprocessor) persist as a tool for automated code generation, often compensating for generic limitations.

- Third-party vendors (often open-source) provide solutions that enhance or compensate for the standard library, typically using external configuration files (YAML, JSON, etc.).

- Specialized generators very often use external blueprints or templates.

This complex structure has limitations. Generics can obscure the core language, macros are unsafe, configuration files are poorly disguised scripts, and code generators rely on inflexible static templates. These limitations are why I believe the current trend of Internal Development Platforms has limited growth potential.

As we look forward to the role of generative AI in streamlining these processes, the question becomes: Can generative AI-based copilots not only simplify but also enhance our ability to balance commonality and variability in software engineering?

This is going to be the main topic of my future research to be reported in the next publications. Stay tuned.

5 Ways to Skin a Lambda Function: A DevTools Comparison Guide

TL;DR

As the saying goes, there are several ways to skin a cat...in the tech world, there are 5 ways to skin a Lambda Function 🤩

Let's Compare 5 DevTools

Introduction

As developers try to bridge the gap between development and DevOps, I thought it would be helpful to compare Programming Languages and DevTools.

Let's start with the idea of a simple function that would upload a text file to a Bucket in our cloud app.

The next step is to demonstrate several ways this could be accomplished.

Note: In cloud development, managing permissions and bucket identities, packaging runtime code, and handling multiple files for infrastructure and runtime add layers of complexity to the development process.

Let's dive into some code!

1. Wing

After installing Wing, let's create a file:

main.w

If you aren't familiar with the Wing Programming Language, please check out the open-source repo HERE

bring cloud;

let bucket = new cloud.Bucket();

new cloud.Function(inflight () => {

bucket.put("hello.txt", "world!");

});

Let's do a breakdown of what's happening in the code above.

bring cloud is Wing's import syntax

Create a Cloud Bucket:

let bucket = new cloud.Bucket(); initializes a new cloud bucket instance.

On the backend, the Wing platform provisions a new bucket in your cloud provider's environment. This bucket is used for storing and retrieving data.

Create a Cloud Function: The new cloud.Function(inflight () => { ... }); statement defines a new cloud function.

This function, when triggered, performs the actions defined within its body.

bucket.put("hello.txt", "world!"); uploads a file named hello.txt with the content world! to the cloud bucket created earlier.

Compile & Deploy to AWS

wing compile --platform tf-aws main.w

terraform apply

That's it. Wing takes care of the complexity: permissions, getting the bucket identity in the runtime code, packaging the runtime code into a bucket, and having to write multiple files for infrastructure and runtime.

Not to mention that it generates IaC (Terraform or CloudFormation), plus JavaScript that you can deploy with existing tools.

And while you develop, you can use the local simulator to get instant feedback and shorten the iteration cycles.

Wing even has a playground that you can try out in the browser!

2. Pulumi

Step 1: Initialize a New Pulumi Project

mkdir pulumi-s3-lambda-ts

cd pulumi-s3-lambda-ts

pulumi new aws-typescript

Step 2: Write the code to upload a text file to S3

This will be your project structure.

pulumi-s3-lambda-ts/

├─ src/

│ ├─ index.ts # Pulumi infrastructure code

│ └─ lambda/

│ └─ index.ts # Lambda function code to upload a file to S3

├─ tsconfig.json # TypeScript configuration

└─ package.json # Node.js project file with dependencies

Let's add this code to index.ts

import * as pulumi from "@pulumi/pulumi";

import * as aws from "@pulumi/aws";

// Create an AWS S3 bucket

const bucket = new aws.s3.Bucket("myBucket", {

acl: "private",

});

// IAM role for the Lambda function

const lambdaRole = new aws.iam.Role("lambdaRole", {

assumeRolePolicy: JSON.stringify({

Version: "2012-10-17",

Statement: [

{

Action: "sts:AssumeRole",

Principal: {

Service: "lambda.amazonaws.com",

},

Effect: "Allow",

Sid: "",

},

],

}),

});

// Attach the AWSLambdaBasicExecutionRole policy

new aws.iam.RolePolicyAttachment("lambdaExecutionRole", {

role: lambdaRole,

policyArn: aws.iam.ManagedPolicy.AWSLambdaBasicExecutionRole,

});

// Policy to allow Lambda function to access the S3 bucket

const lambdaS3Policy = new aws.iam.Policy("lambdaS3Policy", {

policy: bucket.arn.apply((arn) =>

JSON.stringify({

Version: "2012-10-17",

Statement: [

{

Action: ["s3:PutObject", "s3:GetObject"],

Resource: `${arn}/*`,

Effect: "Allow",

},

],

})

),

});

// Attach policy to Lambda role

new aws.iam.RolePolicyAttachment("lambdaS3PolicyAttachment", {

role: lambdaRole,

policyArn: lambdaS3Policy.arn,

});

// Lambda function

const lambda = new aws.lambda.Function("myLambda", {

code: new pulumi.asset.AssetArchive({

".": new pulumi.asset.FileArchive("./src/lambda"),

}),

runtime: aws.lambda.Runtime.NodeJS12dX,

role: lambdaRole.arn,

handler: "index.handler",

environment: {

variables: {

BUCKET_NAME: bucket.bucket,

},

},

});

export const bucketName = bucket.id;

export const lambdaArn = lambda.arn;
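The inline policy JSON is easy to get subtly wrong, so it can help to factor it into a small, typed helper. The sketch below is an assumption-laden illustration, not part of the Pulumi API: putOnlyPolicy, PolicyStatement, and PolicyDocument are hypothetical names, and the example bucket ARN is made up. It relies on one fact about IAM: the policy language Version is the fixed string "2012-10-17", not a date you pick.

```typescript
// Hypothetical types describing the small subset of an IAM policy used here.
interface PolicyStatement {
  Effect: "Allow" | "Deny";
  Action: string[];
  Resource: string;
}

interface PolicyDocument {
  Version: "2012-10-17"; // IAM policy language version (a fixed identifier)
  Statement: PolicyStatement[];
}

// Hypothetical helper: allows uploading objects to one bucket and nothing else.
function putOnlyPolicy(bucketArn: string): PolicyDocument {
  return {
    Version: "2012-10-17",
    Statement: [
      {
        Effect: "Allow",
        Action: ["s3:PutObject"],
        Resource: `${bucketArn}/*`, // objects inside the bucket, not the bucket itself
      },
    ],
  };
}

// Example with a made-up ARN.
const policyJson = JSON.stringify(putOnlyPolicy("arn:aws:s3:::example-bucket"));
```

In the Pulumi program, such a helper would be called inside bucket.arn.apply((arn) => JSON.stringify(putOnlyPolicy(arn))). Granting only s3:PutObject is a design choice for this upload-only function; the listing above also grants s3:GetObject.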

Next, create a lambda directory with an index.ts file for the Lambda function code:

import { S3 } from "aws-sdk";

const s3 = new S3();

export const handler = async (): Promise<void> => {

const bucketName = process.env.BUCKET_NAME || "";

const fileName = "example.txt";

const content = "Hello, Pulumi!";

const params = {

Bucket: bucketName,

Key: fileName,

Body: content,

};

try {

await s3.putObject(params).promise();

console.log(

`File uploaded successfully at https://${bucketName}.s3.amazonaws.com/${fileName}`

);

} catch (err) {

console.log(err);

}

};
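Because the handler above talks to S3 directly, it can only be exercised against a real bucket. One possible way to check its logic locally is to inject the upload call. The sketch below assumes hypothetical names (PutObject, makeHandler, fakePut) and deliberately avoids importing the SDK so that it runs anywhere.

```typescript
// Hypothetical seam: the handler depends on this function type, not on the SDK.
type PutParams = { Bucket: string; Key: string; Body: string };
type PutObject = (params: PutParams) => Promise<void>;

// Builds a handler that reports success or failure as a boolean.
function makeHandler(putObject: PutObject, bucketName: string) {
  return async (): Promise<boolean> => {
    if (bucketName === "") {
      // Fail early instead of calling S3 with an empty bucket name.
      console.log("BUCKET_NAME is not set");
      return false;
    }
    try {
      await putObject({ Bucket: bucketName, Key: "example.txt", Body: "Hello, Pulumi!" });
      return true;
    } catch (err) {
      console.log(err);
      return false;
    }
  };
}

// In-memory fake used instead of S3 during local checks.
const uploaded: PutParams[] = [];
const fakePut: PutObject = async (params) => {
  uploaded.push(params);
};

const localHandler = makeHandler(fakePut, "demo-bucket");
```

In the deployed function, the injected function would wrap the same s3.putObject(params).promise() call shown above, and bucketName would come from process.env.BUCKET_NAME.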

Step 3: TypeScript Configuration (tsconfig.json)

{

"compilerOptions": {

"target": "ES2018",

"module": "CommonJS",

"strict": true,

"esModuleInterop": true,

"skipLibCheck": true,

"forceConsistentCasingInFileNames": true

},

"include": ["src/**/*.ts"],

"exclude": ["node_modules", "**/*.spec.ts"]

}

After creating a Pulumi project, a YAML file, Pulumi.yaml, is generated automatically:

name: s3-lambda-pulumi

runtime: nodejs

description: A simple example that uploads a file to an S3 bucket using a Lambda function

template:

config:

aws:region:

description: The AWS region to deploy into

default: us-west-2

Deploy with Pulumi

Ensure your lambda directory with the index.js file is correctly set up. Then, run the following command to deploy your infrastructure: pulumi up

3. AWS-CDK

Step 1: Initialize a New CDK Project

mkdir cdk-s3-lambda

cd cdk-s3-lambda

cdk init app --language=typescript

Step 2: Add Dependencies

npm install @aws-cdk/aws-lambda @aws-cdk/aws-s3

Step 3: Define the AWS Resources in CDK

File: lib/cdk-s3-lambda-stack.ts

import * as cdk from "@aws-cdk/core";

import * as lambda from "@aws-cdk/aws-lambda";

import * as s3 from "@aws-cdk/aws-s3";

export class CdkS3LambdaStack extends cdk.Stack {

constructor(scope: cdk.Construct, id: string, props?: cdk.StackProps) {

super(scope, id, props);

// Create the S3 bucket

const bucket = new s3.Bucket(this, "MyBucket", {

removalPolicy: cdk.RemovalPolicy.DESTROY, // NOT recommended for production code

});

// Define the Lambda function

const lambdaFunction = new lambda.Function(this, "MyLambda", {

runtime: lambda.Runtime.NODEJS_14_X, // Define the runtime

handler: "index.handler", // Specifies the entry point

code: lambda.Code.fromAsset("lambda"), // Directory containing your Lambda code

environment: {

BUCKET_NAME: bucket.bucketName,

},

});

// Grant the Lambda function permissions to write to the S3 bucket

bucket.grantWrite(lambdaFunction);

}

}

Step 4: Lambda Function Code

Create the same file structure as in the Pulumi example above, with the handler in lambda/index.ts:

import { S3 } from 'aws-sdk';

const s3 = new S3();

exports.handler = async (event: any) => {

const bucketName = process.env.BUCKET_NAME;

const fileName = 'uploaded_file.txt';

const content = 'Hello, CDK! This file was uploaded by a Lambda function!';

try {

const result = await s3.putObject({

Bucket: bucketName!,

Key: fileName,

Body: content,

}).promise();

console.log(`File uploaded successfully: ${JSON.stringify(result)}`);

return {

statusCode: 200,

body: `File uploaded successfully: ${fileName}`,

};

} catch (error) {

console.log(error);

return {

statusCode: 500,

body: `Failed to upload file: ${error}`,

};

}

};

Deploy the CDK Stack

First, compile your TypeScript code with npm run build. Then deploy your CDK stack to AWS with cdk deploy.

4. CDK for Terraform

Step 1: Initialize a New CDKTF Project

mkdir cdktf-s3-lambda-ts

cd cdktf-s3-lambda-ts

Then, initialize a new CDKTF project using TypeScript:

cdktf init --template="typescript" --local

Step 2: Install AWS Provider and Add Dependencies

npm install @cdktf/provider-aws

Step 3: Define the Infrastructure

Edit main.ts to define the S3 bucket and Lambda function:

import { Construct } from "constructs";

import { App, TerraformStack } from "cdktf";

import { AwsProvider, s3, lambdafunction, iam } from "@cdktf/provider-aws";

class MyStack extends TerraformStack {

constructor(scope: Construct, id: string) {

super(scope, id);

new AwsProvider(this, "aws", { region: "us-west-2" });

// S3 bucket

const bucket = new s3.S3Bucket(this, "lambdaBucket", {

bucketPrefix: "cdktf-lambda-",

});

// IAM role for Lambda

const role = new iam.IamRole(this, "lambdaRole", {

name: "lambda_execution_role",

assumeRolePolicy: JSON.stringify({

Version: "2012-10-17",

Statement: [